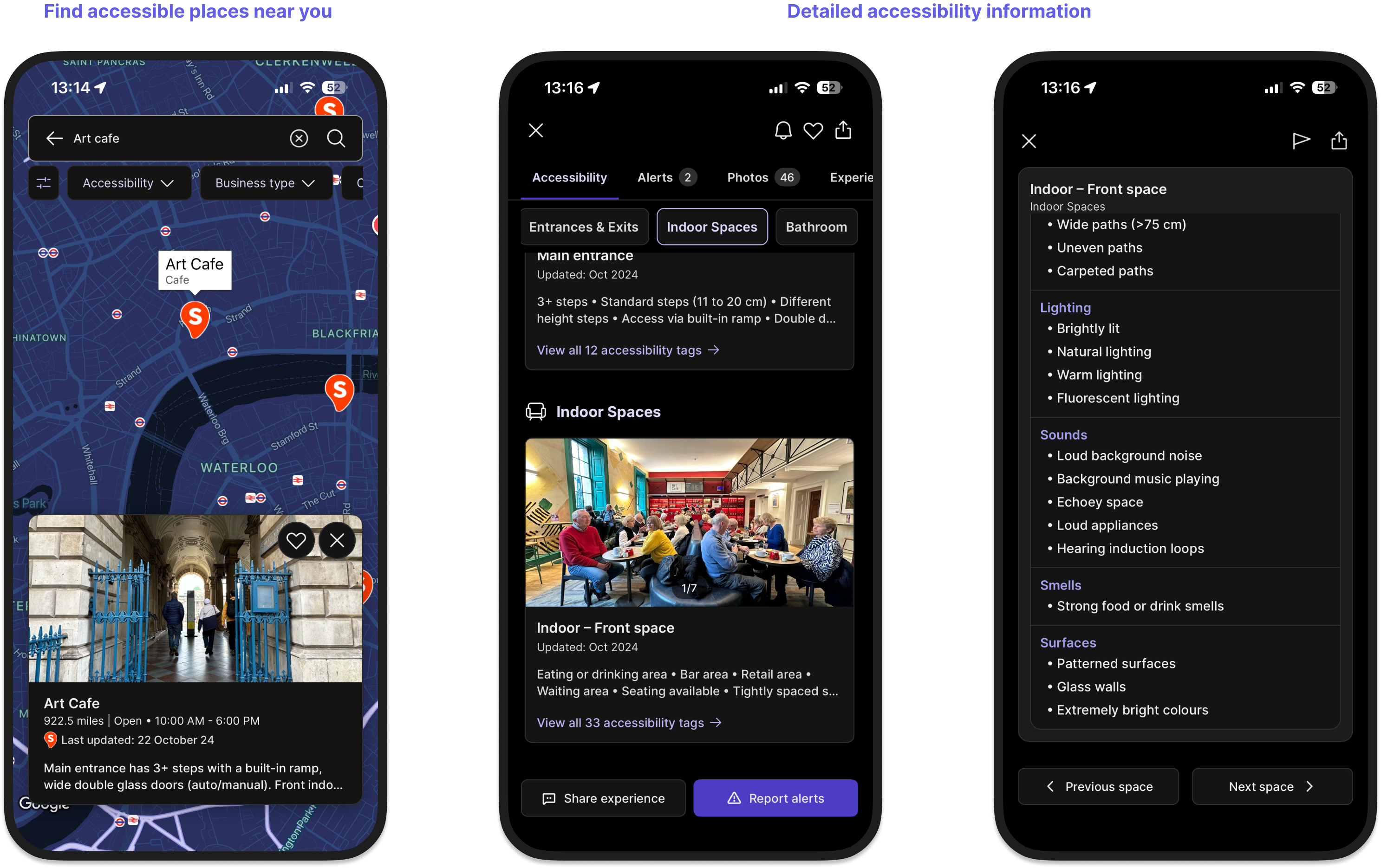

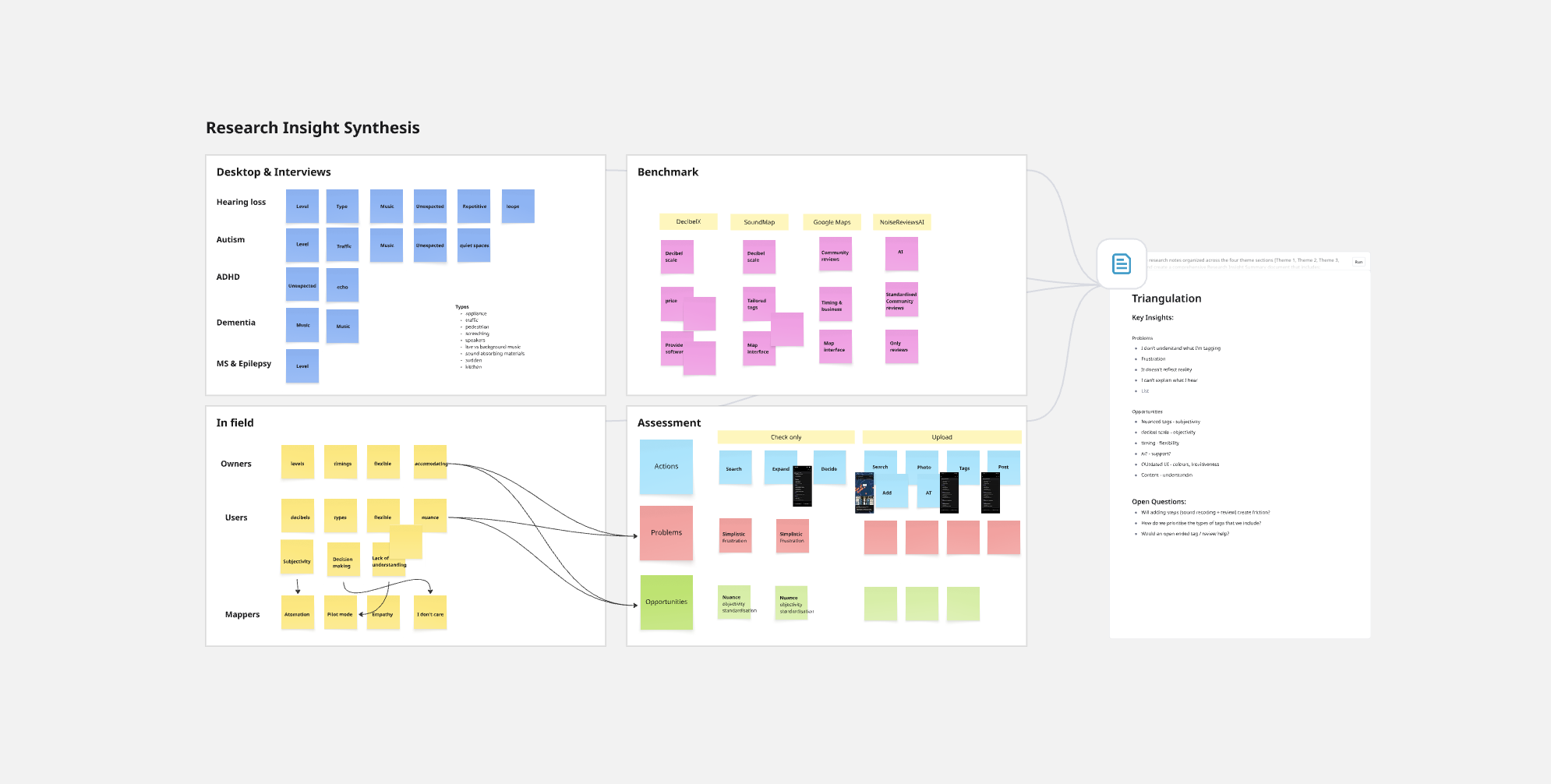

The app records a range of accessibility factors for venues so that disabled people can plan their day with confidence. These range from mobility data, such as measurements, to visual, hearing and sensory (VHS) conditions, such as colours, patterns, smells and sounds. While we were in the field, speaking directly with users and business owners, one pattern kept surfacing: the app's sound data wasn't quite right.

The app offered a binary choice: Loud or Quiet. But a pub that's peaceful on a Tuesday lunchtime is a completely different environment on a Friday evening. For users with specific sound-related accessibility needs (for example, people with hearing conditions, sensory sensitivities or anxiety), that gap isn't a minor inconvenience; it's the difference between going somewhere and staying home.

Detected challenges

— Sound is inherently difficult to measure: it is variable and depends on context.

— Users lacked trust in the sound information, and business owners were dissatisfied with how the sound in their venues was described.

Critically, Sociability's mission is not only to provide that information but also to make the data collection process itself inclusive and participatory, allowing users to shape the database by contributing their own experiences. There was an opportunity to improve the experience as a whole.

How might we ensure the sound data collected is objective enough to build user confidence, yet flexible and nuanced enough to reflect real contexts?