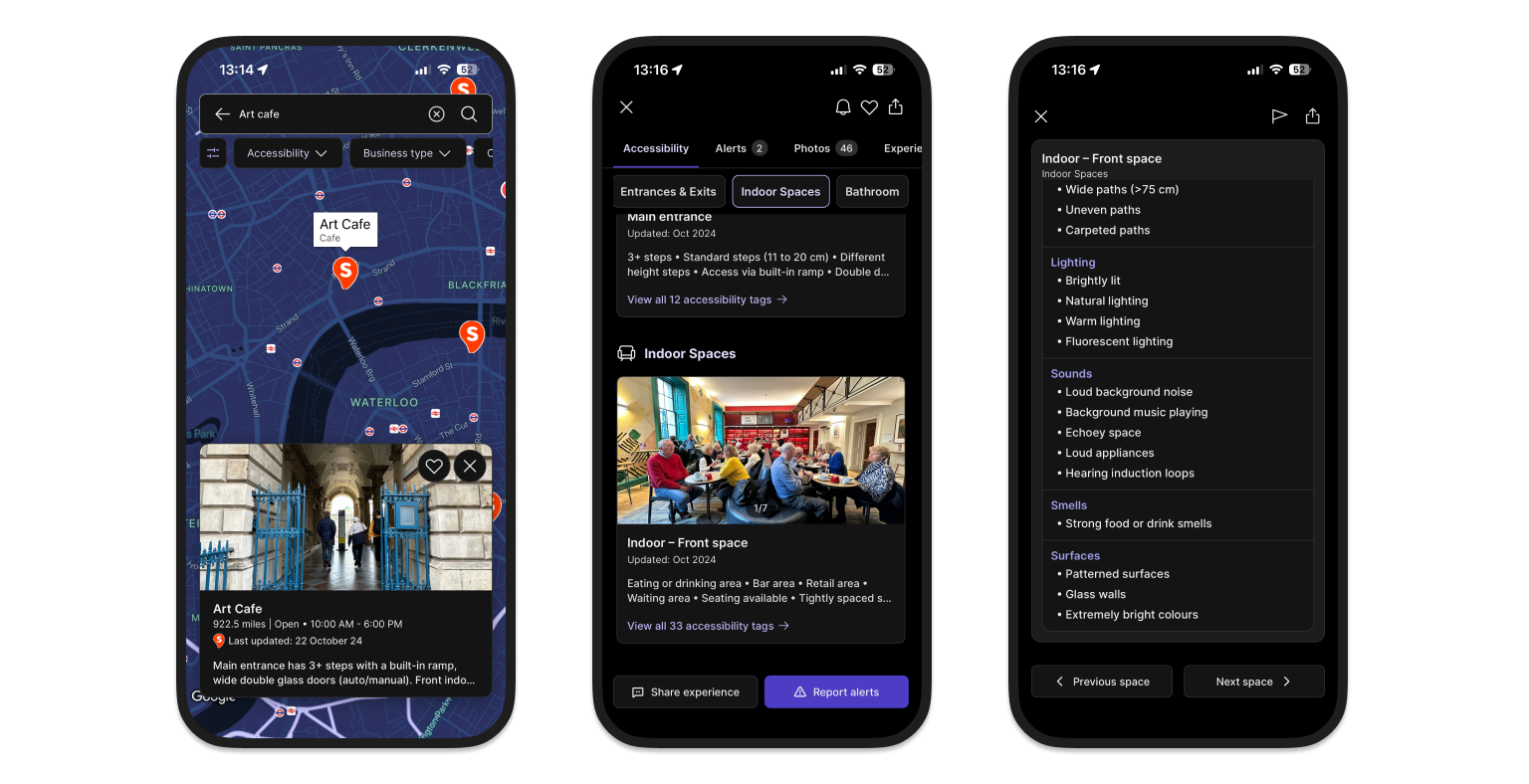

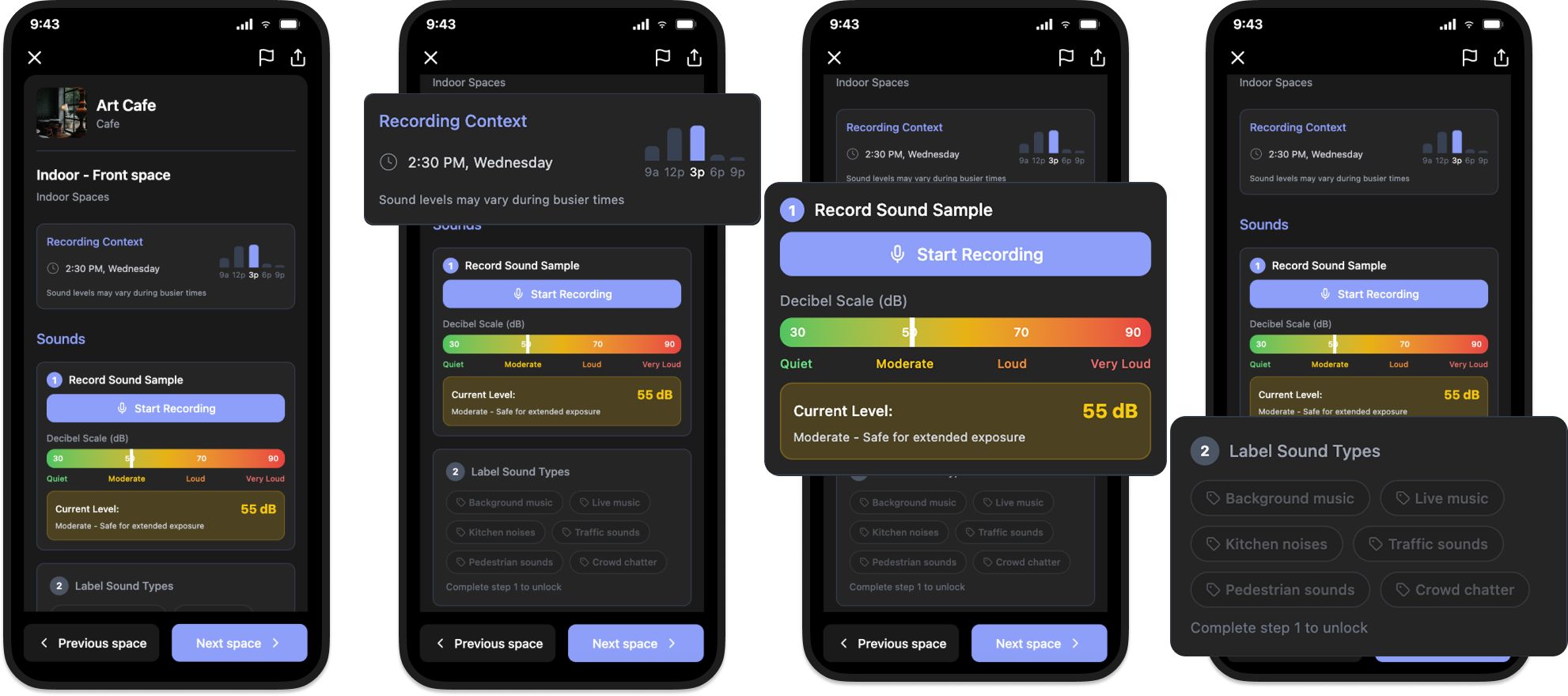

Sociability collects accessibility information across a wide range of factors, from mobility measurements to visual, hearing and sensory conditions such as colours, smells and sounds. During fieldwork with users and venue owners, a recurring issue emerged: the app's sound data was too simplistic to be useful.

Sound was represented with a binary label: Quiet / Loud. But sound environments are highly contextual. A café might be calm in the morning and overwhelming during peak hours. For users with sensory sensitivities, hearing conditions or anxiety, this difference can determine whether a place feels accessible or not.

The current model created two problems:


- Low user trust in the accuracy of sound information.
- Frustration among contributors who felt the categories misrepresented their venues.

This revealed a deeper product challenge: Sociability's mission is not only to provide accessibility information but also to make the data collection process itself inclusive and participatory, allowing users to shape the database by contributing their own experiences. Fixing the sound data was therefore an opportunity to improve the experience as a whole.

How might we ensure the sound data collected is objective enough to build user confidence, yet flexible and nuanced enough to reflect real contexts?